AI for Predicting the Number of Visitors

The client is a Scandinavian supermarket chain with 80+ supermarkets across the region.

The daily count of supermarket visitors depends on many factors: day of the week, time of year, upcoming or past holidays, size of the settlement, etc.

Each store's management needs to plan in advance how many employees will work on a given day. Too many employees on shift cut into profit through excess wages; too few mean lost sales and dissatisfied visitors.

In addition, until recently store managers drew up the schedule manually, and coordinating work assignments took a lot of time because the manager had to phone each employee. The company's management decided to automate the planning of employee work schedules and use AI to forecast demand.

The challenge of the task is that forecasting systems can usually make high-quality forecasts for 1-4 weeks, while the country's legislation requires employers to inform employees of their schedule at least 4 weeks in advance. This means that the client needs to forecast demand 8 weeks ahead but with the same quality as 1-4-week forecasts, i.e. with 90% accuracy.
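The text does not say how the 90% figure is measured. A minimal sketch, assuming accuracy is read as 1 minus the mean absolute percentage error (MAPE) of the daily visitor forecast; the function name and the sample numbers are illustrative only:

```python
def forecast_accuracy(actual, predicted):
    """Return 1 - MAPE, skipping days with zero actual visitors."""
    pairs = [(a, p) for a, p in zip(actual, predicted) if a != 0]
    if not pairs:
        raise ValueError("no non-zero actual values to score against")
    mape = sum(abs(a - p) / abs(a) for a, p in pairs) / len(pairs)
    return 1.0 - mape


# Made-up daily visitor counts and forecasts for one store.
actual = [1000, 1200, 800, 1500]
predicted = [950, 1260, 880, 1425]
accuracy = forecast_accuracy(actual, predicted)
print(f"accuracy: {accuracy:.1%}")
```

Under this reading, a forecast meets the target when the value is 0.90 or higher at the 8-week horizon.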

Our tasks also included application deployment automation using a Kubernetes cluster.

Predicting the number of visitors using AI

At first, we tried classic time series models such as ARIMA and Prophet. They generalised well, but still did not provide the required accuracy when forecasting 8 weeks ahead.

Looking for a better solution, we switched to models using the boosting method, i.e. creating an ensemble of weak (poorly predictive) models to build a strong final model. The advantages of the method include resistance to noise and incorrect data and efficiency when working with small amounts of data. Boosting models are widely used in various fields where high forecasting accuracy is important.

The best choice in our case was the open-source CatBoost model. It handles categorical data effectively and exposes many hyperparameters for fine-tuning. To ensure consistent prediction quality across stores with varying attendance levels, we optimized the model using the RMSE loss function. We also configured specific parameters to limit the model's "aggressiveness" during sharp data spikes and to reduce its reliance on random patterns.
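As a rough illustration of such a setup, the sketch below collects CatBoost settings in a plain dictionary. Only the RMSE loss comes from the text; every numeric value and every column name is an assumption made for the example, and the actual configuration is not given in the source.

```python
# Illustrative values only; the source does not list the real settings.
CATBOOST_PARAMS = {
    "loss_function": "RMSE",   # the loss named in the text
    "iterations": 1000,
    "learning_rate": 0.05,
    "depth": 6,
    "l2_leaf_reg": 5.0,        # stronger L2 regularisation dampens reactions to spikes
    "random_strength": 1.0,    # randomness in split scoring, a guard against overfitting
    "random_seed": 42,
    "verbose": False,
}

# Hypothetical names of the categorical columns in the training table.
CATEGORICAL_FEATURES = ["store_id", "region", "weekday"]


def build_model(params=CATBOOST_PARAMS):
    """Create an untrained regressor; requires the catboost package."""
    from catboost import CatBoostRegressor  # imported lazily on purpose
    return CatBoostRegressor(**params)
```

Training would then look like `build_model().fit(X_train, y_train, cat_features=CATEGORICAL_FEATURES)`, where `X_train` and `y_train` are placeholders for the prepared feature table and the visitor counts.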

We also implemented a data preparation procedure that includes automated anomaly cleaning. Values identified as "noise" (e.g., technical failures or extreme outliers) are replaced to prevent them from distorting future forecasts. Furthermore, for recently opened locations lacking historical data, we implemented a proxy-store matching logic. This allows the system to generate forecasts based on similar existing stores.
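The cleaning rule itself is not described in the text. One common way to do it, shown here as a sketch under that assumption, is a Hampel-style filter: each value is compared with the median of its neighbours and replaced by that median when it deviates too far. The window size, the threshold and the minimum scale are illustrative defaults.

```python
from statistics import median


def clean_anomalies(values, window=7, threshold=3.0, min_scale=1.0):
    """Replace outliers with the median of the surrounding window."""
    values = list(values)
    cleaned = list(values)
    half = window // 2
    for i, value in enumerate(values):
        lo, hi = max(0, i - half), min(len(values), i + half + 1)
        neighbours = values[lo:i] + values[i + 1:hi]
        if not neighbours:
            continue
        centre = median(neighbours)
        # Median absolute deviation, scaled to be comparable to a standard deviation.
        mad = median(abs(v - centre) for v in neighbours)
        scale = max(1.4826 * mad, min_scale)
        if abs(value - centre) > threshold * scale:
            cleaned[i] = centre
    return cleaned


# Made-up series: a counter failure (0) and an extreme spike (5000).
daily = [1000, 1020, 980, 0, 1010, 990, 5000, 1005, 995]
print(clean_anomalies(daily))
```

Genuine holiday peaks would need to be excluded from such a filter, otherwise they would be flattened along with the noise.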

We selected the input data necessary to obtain the result of the required accuracy:

- the sales data from 12 months ago,

- the trend for 12 and 8 weeks,

- contextual data (store area, population, region),

- a feature for taking into account movable holidays.
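A sketch of how such a feature row might be assembled from a weekly history. It assumes that "trend" means the least-squares slope over the last 8 and 12 weeks and that "12 months ago" is taken as 52 weeks before the first forecast week; the dictionary keys are hypothetical, and the movable-holiday feature is omitted for brevity.

```python
from statistics import mean


def linear_trend(values):
    """Least-squares slope of the values against their index (units per week)."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = mean(values)
    numerator = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator


def build_features(weekly_values, store):
    """weekly_values: weekly totals, oldest first, covering at least 52 weeks."""
    if len(weekly_values) < 52:
        raise ValueError("need at least 52 weeks of history")
    return {
        # Same week one year before the first week being forecast.
        "value_year_ago": weekly_values[-52],
        "trend_8w": linear_trend(weekly_values[-8:]),
        "trend_12w": linear_trend(weekly_values[-12:]),
        # Contextual data about the store.
        "area_m2": store["area_m2"],
        "population": store["population"],
        "region": store["region"],
    }


history = [100 + 2 * week for week in range(60)]  # made-up, steadily growing series
store = {"area_m2": 1200, "population": 50000, "region": "north"}
print(build_features(history, store))
```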

Since the model has only been running for its first year, there are cases where the client knows from experience that the forecast needs to be adjusted using logic more complex than simply looking at last year's data. For example, for holidays with a fixed date, demand may depend on the day of the week on which the holiday falls. For movable holidays (Easter and others), the system performs a dynamic date shift to find comparable periods in the previous year.
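The date shift for Easter can be sketched as follows. The Easter date is computed with the standard anonymous Gregorian algorithm; the rule around it is an assumption for illustration: dates within a week of Easter are mapped to the same offset from last year's Easter, and all other dates are shifted back by 52 weeks so that the weekday is preserved.

```python
from datetime import date, timedelta


def easter_sunday(year):
    """Western Easter Sunday (anonymous Gregorian algorithm)."""
    a = year % 19
    b, c = divmod(year, 100)
    d, e = divmod(b, 4)
    f = (b + 8) // 25
    g = (b - f + 1) // 3
    h = (19 * a + b - d - g + 15) % 30
    i, k = divmod(c, 4)
    l = (32 + 2 * e + 2 * i - h - k) % 7
    m = (a + 11 * h + 22 * l) // 451
    month, day = divmod(h + l - 7 * m + 114, 31)
    return date(year, month, day + 1)


def comparable_date(target, window_days=7):
    """Find the day in the previous year that is comparable to `target`."""
    offset = target - easter_sunday(target.year)
    if abs(offset.days) <= window_days:
        # Keep the same distance from Easter as in the target year.
        return easter_sunday(target.year - 1) + offset
    # Otherwise go back exactly 52 weeks to land on the same weekday.
    return target - timedelta(days=364)


print(comparable_date(date(2024, 4, 1)))   # Easter Monday 2024
print(comparable_date(date(2024, 6, 12)))  # an ordinary Wednesday
```

Other movable holidays defined relative to Easter could reuse the same offset logic with a wider window.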

The UI design was made by Software Country's designer

In addition, we distributed stores into 20 clusters by behaviour factor. For example, in large cities the influx of visitors on peak dates is much stronger than in small towns. Using data from a specific cluster of stores in the forecasting model further improves the accuracy of predictions.
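The clustering method and the exact behaviour factor are not specified. As a sketch, assume the factor is a single "peak uplift" number per store (visitors on peak dates divided by visitors on ordinary days) and group stores with a small one-dimensional k-means. The example uses 3 clusters and invented values; the project used 20.

```python
def kmeans_1d(values, k, iterations=50):
    """Cluster numbers into k groups; returns (labels, centres)."""
    ordered = sorted(values)
    # Deterministic start: centres at evenly spaced quantiles.
    centres = [ordered[(2 * j + 1) * len(ordered) // (2 * k)] for j in range(k)]
    for _ in range(iterations):
        groups = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda j: abs(v - centres[j]))
            groups[nearest].append(v)
        # An empty group keeps its previous centre.
        updated = [sum(g) / len(g) if g else centres[j] for j, g in enumerate(groups)]
        if updated == centres:
            break
        centres = updated
    labels = [min(range(k), key=lambda j: abs(v - centres[j])) for v in values]
    return labels, centres


# Made-up peak uplift per store: small towns, mid-size towns, large cities.
uplift = [1.1, 1.2, 1.15, 2.0, 2.1, 1.9, 3.0, 3.2, 3.1]
labels, centres = kmeans_1d(uplift, k=3)
print(labels)
```

The resulting cluster label can then be passed to the forecasting model as one more categorical feature.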

In the final stage, an algorithm for smoothing hourly peaks is applied to the predicted values. The final result is automatically uploaded to the database for subsequent work shift planning.
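The smoothing algorithm is not named in the text. A simple stand-in, given here as an assumption, is a centred moving average over the hourly forecast that is then rescaled so the daily total stays the same:

```python
def smooth_hourly(forecast, window=3):
    """Centred moving average that preserves the daily total."""
    half = window // 2
    smoothed = []
    for i in range(len(forecast)):
        lo, hi = max(0, i - half), min(len(forecast), i + half + 1)
        smoothed.append(sum(forecast[lo:hi]) / (hi - lo))
    smoothed_total = sum(smoothed)
    if smoothed_total == 0:
        return smoothed
    # Rescale so that smoothing does not change the number of visitors per day.
    scale = sum(forecast) / smoothed_total
    return [value * scale for value in smoothed]


hourly = [50, 60, 200, 70, 65]  # made-up hourly forecast with a single sharp peak
print([round(v, 1) for v in smooth_hourly(hourly)])
```

Keeping the total unchanged matters for shift planning: the peak is spread over neighbouring hours instead of being discarded.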

Web application for work schedule drawing automation

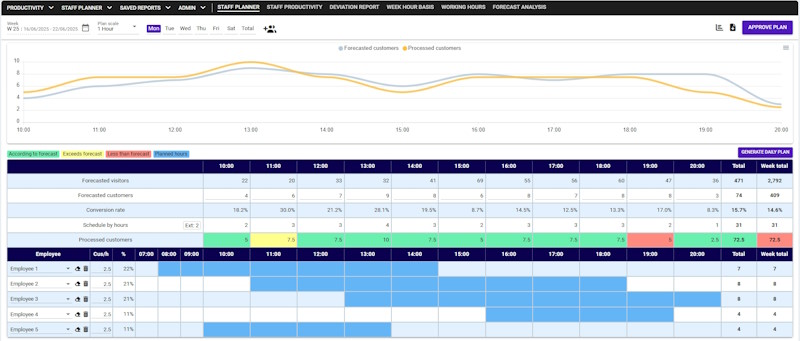

The resulting forecast serves as the foundation for the automated scheduling system. To streamline communication and replace manual phone calls, we developed a custom progressive web app (PWA) where employees manage their availability and view assigned shifts.

For the scheduling engine itself, we moved beyond simple iterative algorithms. Our solution evaluates all possible shift combinations simultaneously to align personnel with predicted foot traffic. The model operates on a framework of hard constraints (strict legal limits, physical availability) and soft constraints (business goals). This structure allows the client to flexibly balance between payroll cost savings and maximum service levels.
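The split between hard and soft constraints can be illustrated on a toy instance. The sketch below enumerates every assignment exhaustively, which only works for a handful of employees and shifts; a production engine would rely on a dedicated constraint or integer-programming solver. All names, weights and data are invented for the example.

```python
from itertools import product


def best_schedule(employees, demand, wage_weight=1.0, shortfall_weight=5.0):
    """Return (assignments, cost) for the cheapest feasible schedule."""
    names, shifts = list(employees), list(demand)
    slots = [(name, shift) for name in names for shift in shifts]
    best, best_cost = None, float("inf")
    for bits in product((0, 1), repeat=len(slots)):
        chosen = [slot for slot, bit in zip(slots, bits) if bit]
        # Hard constraint: nobody works a shift they are unavailable for.
        if any(s not in employees[n]["available"] for n, s in chosen):
            continue
        # Hard constraint: nobody exceeds their maximum number of shifts.
        if any(sum(1 for m, _ in chosen if m == n) > employees[n]["max_shifts"]
               for n in names):
            continue
        # Soft constraints: payroll cost versus unmet forecast demand.
        staffed = {s: sum(1 for _, t in chosen if t == s) for s in shifts}
        shortfall = sum(max(0, demand[s] - staffed[s]) for s in shifts)
        cost = wage_weight * len(chosen) + shortfall_weight * shortfall
        if cost < best_cost:
            best, best_cost = chosen, cost
    return best, best_cost


employees = {
    "Anna": {"available": {"mon", "tue"}, "max_shifts": 1},
    "Ben": {"available": {"mon", "tue"}, "max_shifts": 2},
}
demand = {"mon": 1, "tue": 2}  # required headcount derived from the forecast
schedule, cost = best_schedule(employees, demand)
print(sorted(schedule), cost)
```

Raising `shortfall_weight` favours service levels, while raising `wage_weight` favours payroll savings, which mirrors the trade-off the client can tune.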

Crucially, the system optimizes not just for coverage, but for productivity. Through Team Composition logic, the algorithm analyzes historical performance data (such as SPLH, sales per labor hour) to identify employee pairs with high compatibility. By prioritizing these "synergetic" combinations, the schedule ensures that the most effective teams are on the floor during peak hours.
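How pair compatibility is scored is not detailed in the text. One simple reading, sketched here as an assumption, is to pool sales and labor hours over all shifts that two employees worked together and compare the resulting SPLH per pair. Field names and figures are invented.

```python
from collections import defaultdict
from itertools import combinations


def pair_splh(shifts):
    """Map each employee pair to its pooled sales per labor hour."""
    totals = defaultdict(lambda: [0.0, 0.0])  # pair -> [sales, labor hours]
    for shift in shifts:
        for pair in combinations(sorted(shift["staff"]), 2):
            totals[pair][0] += shift["sales"]
            totals[pair][1] += shift["labor_hours"]
    return {pair: sales / hours
            for pair, (sales, hours) in totals.items() if hours > 0}


history = [
    {"staff": ["Anna", "Ben"], "sales": 1600, "labor_hours": 16},
    {"staff": ["Anna", "Cara"], "sales": 1200, "labor_hours": 16},
    {"staff": ["Ben", "Anna"], "sales": 1760, "labor_hours": 16},
]
scores = pair_splh(history)
best_pair = max(scores, key=scores.get)
print(best_pair, scores[best_pair])
```

A real scoring scheme would also have to control for store, weekday and hour, since a pair that only ever works peak shifts would otherwise look more productive than it is.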

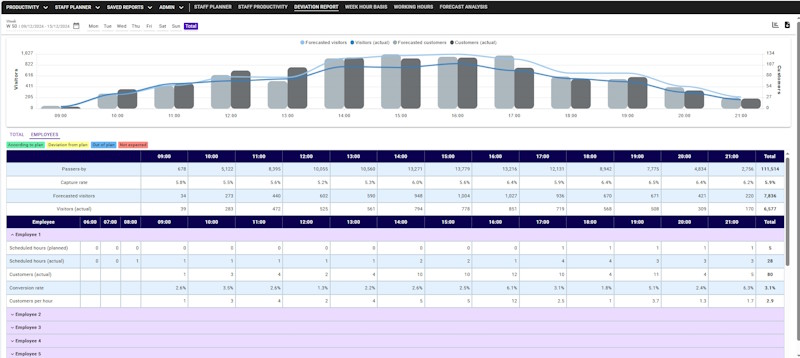

The staff planning application

Technical reports and efficiency metrics are automatically generated within the app. To ensure accuracy, we implemented a data cleaning stage that filters out statistical noise, such as technical logging errors or zero-sales shifts.

Vue 3 was chosen for the front end to ensure a responsive interface with minimal development costs, while C# on the back end ensures compatibility with the client's mixed Linux and Windows infrastructure.

Application deployment automation using a Kubernetes cluster

Previously, independent pipelines for each application slowed down updates and complicated testing. To solve this, we implemented a GitOps approach using Flux CD, creating a universal configurable pipeline.

To further accelerate development and ensure stability, we introduced a dynamic feature-branch system. Now, when a developer creates a branch for a specific task, the system automatically deploys an isolated clone of the modified service. Using the Istio service mesh, we can route traffic specifically through this temporary instance, allowing us to test new features in a full loop without affecting the main development or production environments. This ensures that tasks do not block each other and updates can be released safely.

We also significantly upgraded our observability stack. Moving beyond simple logging, we implemented a comprehensive monitoring system that includes resource metrics and distributed tracing via Jaeger UI. This allows us to visualize the full path of every request across microservices, quickly identifying bottlenecks and performance issues.

Security and access control were centralized using Keycloak for Single Sign-On (SSO), and we established a secure storage system for sensitive data (secrets management) to minimize risks.

The result is a fully transparent infrastructure where every system component is covered by metrics, and deployment of all applications takes ~15 minutes.

Currently, the model provides forecasts of the required accuracy (90%) for weeks without holidays. During holiday weeks, the model is adjusted using dynamic date shift logic; this is especially true for movable holidays since the demand for them depends on the season. At the end of the annual cycle, it will be possible to evaluate the overall results of the model's work.

The PWA application successfully automated the schedule approval process, eliminating the need for manual calls. The scheduling algorithm now additionally optimizes for team productivity using the new Team Composition logic.

Deployment automation has been successfully implemented using GitOps, reducing the deployment time to ~15 minutes. The introduction of isolated testing environments significantly increased the stability of releases.
